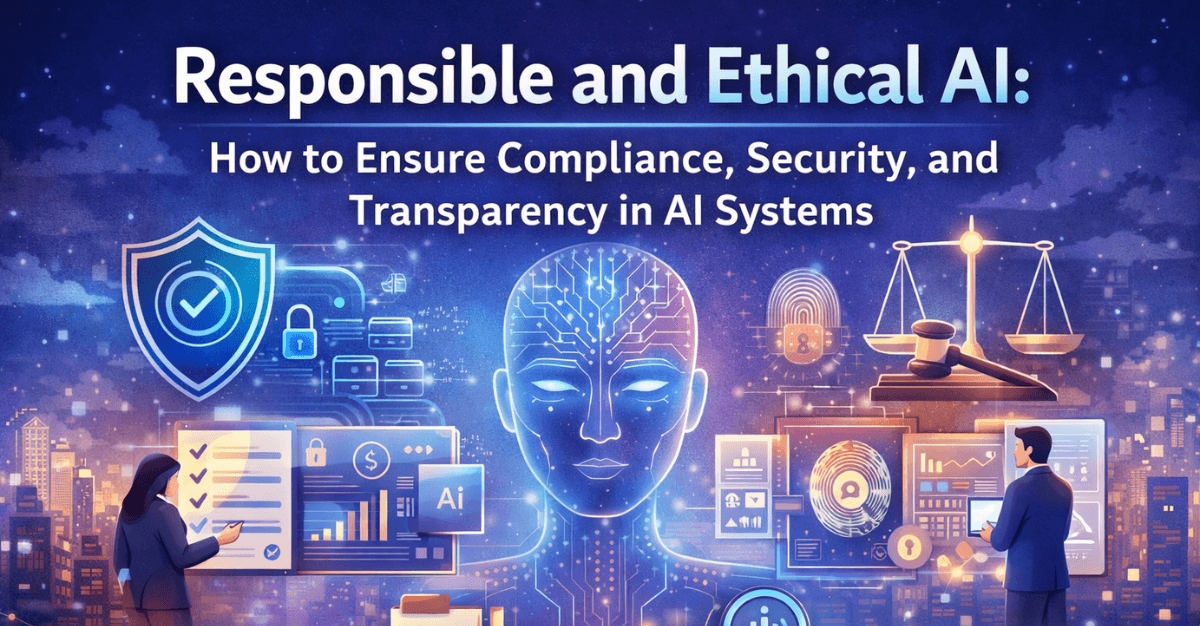

Artificial Intelligence (AI) is no longer a futuristic concept; it is a core driver of innovation across industries. From personalized recommendations and fraud detection to autonomous systems and predictive analytics, AI is transforming how businesses operate. That reach brings a matching responsibility for how these systems behave.

As AI systems become more integrated into decision-making processes, concerns around ethics, compliance, security, and transparency are growing rapidly. Organizations must not only build powerful AI systems but also ensure they are responsible, fair, and trustworthy.

This post explores how modern enterprises can ensure responsible and ethical AI by focusing on compliance, security, and transparency, the three pillars that define trustworthy AI systems.

Understanding Responsible and Ethical AI

Responsible AI refers to the development and deployment of AI systems in a manner that is ethical, fair, accountable, and aligned with societal values. Ethical AI ensures that systems do not cause harm, discriminate unfairly, or operate without oversight.

At its core, responsible AI is built on key principles:

- Fairness: Avoiding bias and discrimination

- Accountability: Taking responsibility for AI decisions

- Transparency: Making AI processes understandable

- Privacy: Protecting user data

- Security: Safeguarding systems from threats

Organizations that adopt these principles not only reduce risks but also build trust with users, regulators, and stakeholders.

Why Responsible AI Matters

Poorly designed AI systems can fail in serious ways: biased hiring algorithms, inaccurate medical predictions, or unethical surveillance practices. These failures can lead to reputational damage, legal penalties, and loss of customer trust.

Responsible AI is important because it:

- Ensures compliance with global regulations

- Builds customer trust and brand credibility

- Reduces operational and legal risks

- Promotes fairness and inclusivity

- Enhances long-term sustainability of AI initiatives

In today’s regulatory landscape, responsible AI is not optional—it is a necessity.

Ensuring Compliance in AI Systems

Compliance is one of the most critical aspects of ethical AI. Governments and regulatory bodies worldwide are introducing laws and frameworks to govern AI usage.

1. Understand Regulatory Requirements

Organizations must stay informed about relevant regulations such as:

- Data protection laws (e.g., the EU's GDPR and similar frameworks)

- Industry-specific regulations (healthcare, finance)

- AI governance guidelines

Compliance begins with understanding which rules apply to your organization and how AI systems interact with them.

2. Implement Data Governance Frameworks

Data is the foundation of AI. Poor data quality or misuse of data can lead to biased and non-compliant systems.

Key practices include:

- Data classification and labeling

- Data minimization

- Consent management

- Data lineage tracking

Strong data governance ensures that AI systems operate within legal and ethical boundaries.
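
As one illustration of data minimization, a pipeline can drop every field that is not on an approved list before a record reaches training. The sketch below is a minimal example under that assumption; the field names and the `ALLOWED_FIELDS` allow-list are hypothetical.

```python
# Hypothetical allow-list: only fields with a documented purpose are kept.
ALLOWED_FIELDS = {"age_band", "region", "account_tenure_months"}


def minimize(record: dict, allowed: set = ALLOWED_FIELDS) -> dict:
    """Return a copy of `record` containing only allow-listed fields."""
    return {key: value for key, value in record.items() if key in allowed}


# Illustrative record, not real data.
raw = {
    "name": "Jane Doe",            # direct identifier, not needed for training
    "email": "jane@example.com",   # direct identifier, not needed for training
    "age_band": "30-39",
    "region": "EU-West",
    "account_tenure_months": 18,
}
minimized = minimize(raw)
print(minimized)
```

An allow-list is used rather than a block-list so that newly added fields are excluded by default until someone approves them.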

3. Conduct Regular Audits

AI systems should be continuously monitored and audited to ensure compliance.

- Perform bias and fairness audits

- Validate model performance

- Track decision outcomes

Regular audits help identify issues early and ensure ongoing compliance.
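
A fairness audit can start with something as simple as comparing how often each group receives a positive decision. The sketch below computes that gap, often called the demographic parity difference. The data, the group labels, and the `REVIEW_THRESHOLD` value are illustrative assumptions, not recommended settings.

```python
from collections import defaultdict


def selection_rates(groups, decisions):
    """Share of positive decisions (1) per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(groups, decisions):
    """Gap between the highest and lowest group selection rate."""
    rates = selection_rates(groups, decisions)
    return max(rates.values()) - min(rates.values())


# Illustrative data only: group label and model decision (1 = approved).
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
decisions = [1, 1, 1, 0, 1, 0, 0, 0]

REVIEW_THRESHOLD = 0.2  # hypothetical value; set by policy, not by this sketch

gap = demographic_parity_difference(groups, decisions)
needs_review = gap > REVIEW_THRESHOLD
print(selection_rates(groups, decisions), gap, needs_review)
```

Here group "a" is approved 75% of the time and group "b" 25%, so the gap is 0.5 and the audit flags the model for review. Demographic parity is only one fairness measure; which one applies depends on the use case.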

4. Maintain Documentation

Demonstrating compliance requires proper documentation, covering:

- Model design and training data

- Decision-making logic

- Risk assessments

Well-documented AI systems are easier to audit and explain.
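
One way to keep such documentation consistent is to store it as a structured record next to the model. The sketch below is a minimal, hypothetical schema; the `ModelRecord` class, its fields, and the example values are assumptions for illustration rather than an established standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelRecord:
    """Hypothetical documentation record kept alongside a model."""
    name: str
    version: str
    training_data: str
    intended_use: str
    decision_logic: str
    known_risks: list = field(default_factory=list)


# Illustrative values only.
record = ModelRecord(
    name="credit-scoring",
    version="1.2.0",
    training_data="Loan applications 2019-2023, personal identifiers removed",
    intended_use="Rank applications for manual review, not automatic rejection",
    decision_logic="Gradient-boosted trees over 24 financial features",
    known_risks=["Under-representation of applicants under 25"],
)

# Serialize to JSON so the record can be versioned and reviewed with the model.
record_json = json.dumps(asdict(record), indent=2, sort_keys=True)
print(record_json)
```

Keeping the record machine-readable means an audit can check that every deployed model has one and that required fields are filled in.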

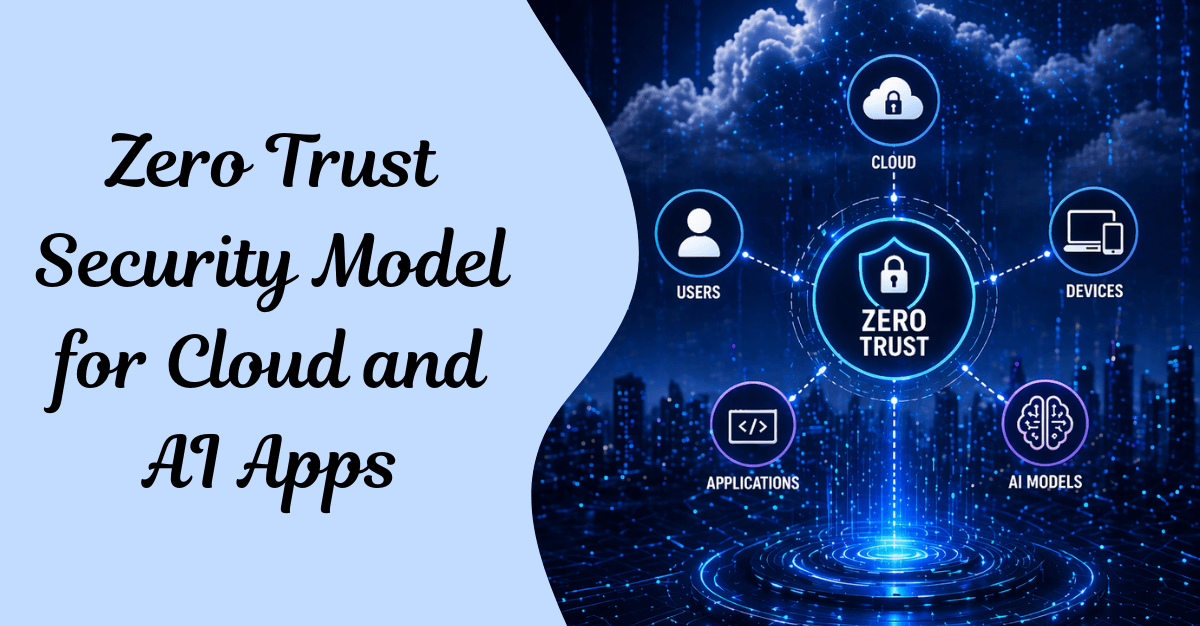

Strengthening Security in AI Systems

AI systems are attractive targets for attackers. Ensuring robust security is essential to protect both data and models.

1. Secure Data Pipelines

Data used in AI systems must be protected throughout its lifecycle.

- Use encryption for data storage and transmission

- Implement access controls

- Monitor data usage
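
Access control and usage monitoring can be combined in a single check that both decides and records every read attempt. The sketch below assumes a simple role-based permission table; the roles, dataset names, and the `can_read` helper are hypothetical.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("data_access")

# Hypothetical role-to-dataset permissions.
PERMISSIONS = {
    "data_scientist": {"training_features"},
    "auditor": {"training_features", "decision_logs"},
}


def can_read(role: str, dataset: str) -> bool:
    """Check the role against the permission table and log the attempt."""
    allowed = dataset in PERMISSIONS.get(role, set())
    # Every attempt is logged, including denied ones, so usage can be reviewed.
    log.info("role=%s dataset=%s allowed=%s", role, dataset, allowed)
    return allowed


print(can_read("auditor", "decision_logs"))
print(can_read("data_scientist", "decision_logs"))
```

Unknown roles fall through to an empty permission set, so the default is to deny access.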

2. Protect Against Adversarial Attacks

AI models can be manipulated through adversarial inputs.

- Use adversarial training techniques

- Monitor unusual patterns

- Validate input data
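
Input validation can be sketched as a set of range checks applied before a request reaches the model. The feature names and bounds below are hypothetical. Checks like these will not stop a carefully crafted adversarial example, but they do filter out malformed or out-of-range inputs.

```python
import math

# Hypothetical feature bounds, for example derived from the training data.
FEATURE_BOUNDS = {
    "age": (18, 100),
    "income": (0, 1_000_000),
}


def validate_input(features: dict, bounds: dict = FEATURE_BOUNDS) -> list:
    """Return a list of problems; an empty list means the input passed."""
    problems = []
    for name, (low, high) in bounds.items():
        value = features.get(name)
        # bool is a subclass of int in Python, so it is rejected explicitly.
        if not isinstance(value, (int, float)) or isinstance(value, bool):
            problems.append(f"{name}: missing or non-numeric")
        elif math.isnan(value) or not low <= value <= high:
            problems.append(f"{name}: outside [{low}, {high}]")
    # Features the model was never trained on are reported as well.
    for name in sorted(set(features) - set(bounds)):
        problems.append(f"{name}: unexpected feature")
    return problems


print(validate_input({"age": 30, "income": 50_000}))
print(validate_input({"age": 500, "income": 50_000}))
```

Returning a list of problems instead of raising on the first one makes it easier to log every issue with a rejected request.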

3. Model Security

AI models themselves can be stolen or reverse-engineered.

- Encrypt model artifacts at rest and in transit

- Implement API security

- Restrict access to authorized users and services
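
As a small example of API security, a model endpoint can verify an API key before serving predictions. The sketch below stores only a hash of each key and compares digests in constant time using the standard library. The key store, client ID, and key value are hypothetical, and a production service would typically rely on an established authentication layer instead.

```python
import hashlib
import hmac


def hash_key(api_key: str) -> str:
    """SHA-256 hex digest of an API key, so the raw key is never stored."""
    return hashlib.sha256(api_key.encode("utf-8")).hexdigest()


# Hypothetical key store: client id -> digest of the issued key.
KEY_STORE = {"client-123": hash_key("example-key-do-not-use")}


def is_authorized(client_id: str, presented_key: str) -> bool:
    """Return True only if the presented key matches the stored digest."""
    expected = KEY_STORE.get(client_id)
    if expected is None:
        return False
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(expected, hash_key(presented_key))


print(is_authorized("client-123", "example-key-do-not-use"))
print(is_authorized("client-123", "wrong-key"))
```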

4. Continuous Monitoring

Security is not a one-time effort.

- Monitor system activity

- Detect anomalies

- Respond to threats in real time
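
Anomaly detection does not have to start with a complex system. The sketch below flags a new measurement when it sits more than a chosen number of standard deviations from recent history (a z-score check). The metric, the sample values, and the threshold of 3.0 are assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history, new_value, threshold=3.0):
    """Flag `new_value` if its z-score against `history` exceeds `threshold`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        return new_value != mu
    return abs(new_value - mu) / sigma > threshold


# Illustrative metric: prediction requests per minute from one client.
requests_per_minute = [98, 102, 101, 99, 100, 103, 97]

print(is_anomalous(requests_per_minute, 101))
print(is_anomalous(requests_per_minute, 250))
```

A sudden jump to 250 requests per minute is flagged, which could indicate scraping or a model extraction attempt and is worth a closer look.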

Achieving Transparency in AI

Transparency is essential for building trust in AI systems. Users should understand how decisions are made, especially in critical applications.

1. Explainable AI (XAI)

Explainable AI focuses on making AI decisions understandable.

- Use interpretable models where possible

- Provide explanations for predictions

- Visualize decision processes
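
For an interpretable model such as a linear scorer, an explanation can be read directly from the weights: each feature's contribution is its weight times its value. The sketch below assumes a hypothetical three-feature model; the weights, bias, and applicant values are invented for illustration.

```python
# Hypothetical weights of a linear scoring model; not from any real system.
WEIGHTS = {"income": 0.5, "debt": -0.75, "tenure": 0.25}
BIAS = 0.5


def predict(features: dict) -> float:
    """Linear score: bias plus the weighted sum of the features."""
    return BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)


def explain(features: dict) -> list:
    """Per-feature contributions, largest absolute effect first."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


applicant = {"income": 4.0, "debt": 2.0, "tenure": 2.0}

print(predict(applicant))
for name, contribution in explain(applicant):
    print(f"{name}: {contribution:+.2f}")
```

The contributions plus the bias add up to the prediction, so the explanation accounts for the whole score rather than approximating it.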

2. Clear Communication

Organizations should communicate AI usage openly.

- Inform users when AI is being used

- Explain how data is collected and processed

- Provide opt-out options

3. Model Interpretability

Complex models such as deep neural networks can be difficult to interpret.

- Use tools for model explainability

- Simplify models when possible

- Balance accuracy and interpretability
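
When the model itself is opaque, model-agnostic techniques can still show which inputs matter. The sketch below implements permutation importance: shuffle one feature across rows and measure how much accuracy drops. The toy `model` function and the data are stand-ins invented for illustration; libraries such as scikit-learn offer maintained implementations of the same idea.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model output equals the label."""
    correct = sum(model(row) == label for row, label in zip(rows, labels))
    return correct / len(rows)


def permutation_importance(model, rows, labels, feature, repeats=10, seed=0):
    """Average accuracy drop when `feature` is shuffled across rows."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    drops = []
    for _ in range(repeats):
        column = [row[feature] for row in rows]
        rng.shuffle(column)
        shuffled = [{**row, feature: value} for row, value in zip(rows, column)]
        drops.append(baseline - accuracy(model, shuffled, labels))
    return sum(drops) / repeats


# Toy stand-in for an opaque model: it only looks at "income".
def model(row):
    return int(row["income"] > 50)


rows = [{"income": i * 10, "shoe_size": 36 + i} for i in range(10)]
labels = [model(row) for row in rows]

for feature in ("income", "shoe_size"):
    print(feature, permutation_importance(model, rows, labels, feature))
```

Because the toy model ignores `shoe_size`, shuffling that column never changes a prediction and its importance is exactly zero.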

4. Ethical Disclosure

Transparency also involves ethical responsibility.

- Disclose limitations of AI systems

- Acknowledge potential biases

- Be honest about risks

Addressing Bias and Fairness

Bias in AI is one of the most significant ethical concerns. It can arise from:

- Biased training data

- Flawed algorithms

- Human assumptions

How to Mitigate Bias:

- Use diverse and representative datasets

- Regularly test for bias

- Apply fairness algorithms

- Involve diverse teams in development
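
One example of a fairness technique is reweighing the training data so that group membership and outcome look statistically independent. Each example gets the weight P(group) × P(label) / P(group, label). The sketch below is a minimal version under that definition; the group labels and outcomes are illustrative only.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights: P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    pair_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * pair_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


# Illustrative data only: group label and historical outcome (1 = positive).
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
for g, y, w in zip(groups, labels, weights):
    print(g, y, round(w, 3))
```

Under-represented combinations, such as a positive outcome in group "b", receive weights above 1, so a model trained with these sample weights sees both groups with the same weighted positive rate.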

Ensuring fairness is not just a technical challenge—it is a societal responsibility.

Building an Ethical AI Framework

To ensure consistency, organizations should establish a structured ethical AI framework.

Key Components:

1. Ethical Guidelines

Define principles and values guiding AI development

2. Governance Structure

Assign roles and responsibilities for AI oversight

3. Risk Management

Identify and mitigate potential risks

4. Training and Awareness

Educate teams about ethical AI practices

5. Continuous Improvement

Regularly update policies and practices

Role of Leadership in Responsible AI

Leadership plays a crucial role in driving ethical AI initiatives.

- Set the vision for responsible AI

- Allocate resources for compliance and security

- Promote a culture of accountability

- Encourage cross-functional collaboration

Without strong leadership, ethical AI efforts may lack direction and impact.

Challenges in Implementing Ethical AI

Despite its importance, implementing responsible AI comes with challenges:

1. Lack of Standardization

Different regions have different regulations, making compliance complex.

2. Technical Complexity

Ensuring transparency and fairness in advanced AI models is difficult.

3. Resource Constraints

Building secure and compliant AI systems requires investment.

4. Evolving Regulations

AI regulations are still evolving, requiring constant adaptation.

Future of Responsible AI

The future of AI will be shaped by how responsibly it is developed and deployed.

Emerging Trends:

- AI Regulation Expansion: Governments are likely to introduce stricter laws

- AI Ethics Boards: Organizations will establish dedicated ethics committees

- Automated Compliance Tools: Tooling, some of it AI-based, will help monitor AI systems for policy violations

- Greater Focus on Explainability: Transparency will become a standard requirement

Responsible AI will become a competitive advantage for organizations that prioritize trust and ethics.

Best Practices for Responsible AI

To summarize, here are key best practices:

- Define clear ethical guidelines

- Ensure compliance with regulations

- Implement strong data governance

- Prioritize security at every level

- Use explainable AI techniques

- Continuously monitor and audit systems

- Promote transparency and accountability

Conclusion

Responsible and ethical AI is not just a technological requirement—it is a business imperative. As AI continues to influence critical decisions, organizations must ensure that their systems are compliant, secure, and transparent.

By adopting a proactive approach and embedding ethical principles into every stage of AI development, businesses can build trustworthy systems that benefit both users and society.

In the long run, organizations that prioritize responsible AI will not only mitigate risks but also gain a significant competitive advantage in an increasingly AI-driven world.

Frequently Asked Questions (FAQs)

1. What is responsible AI?

Responsible AI refers to designing, developing, and deploying AI systems in a way that is ethical, transparent, fair, and accountable while minimizing risks and harm.

2. Why is ethical AI important for businesses?

Ethical AI helps businesses build trust, comply with regulations, reduce bias, and avoid legal or reputational risks associated with unfair or unsafe AI systems.

3. What are the key pillars of responsible AI?

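The core principles are fairness, accountability, transparency, privacy, and security. In practice, this post organizes the work around three pillars: compliance, security, and transparency.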

4. How can organizations ensure AI compliance?

Organizations can ensure compliance by following regulations, implementing data governance, conducting audits, and maintaining proper documentation.

5. What is explainable AI (XAI)?

Explainable AI refers to methods and tools that make AI decisions understandable and interpretable for humans.

6. What are the common risks in AI systems?

Common risks include bias, data privacy violations, lack of transparency, security vulnerabilities, and unintended consequences.

7. How can bias in AI be reduced?

Bias can be reduced by using diverse datasets, testing models regularly, applying fairness techniques, and involving diverse teams.

8. What role does security play in AI systems?

Security protects AI systems from cyber threats, data breaches, and adversarial attacks, ensuring reliability and trust.