AI systems are becoming central to modern businesses—but they also introduce new security risks.

When deployed on cloud platforms, these systems handle sensitive data, expose APIs, and operate at scale. Without proper security, they can become vulnerable to breaches, misuse, and attacks.

This guide explains how to build secure AI systems on cloud platforms, covering key risks, best practices, and practical strategies.

What Is a Secure AI System on Cloud Platforms?

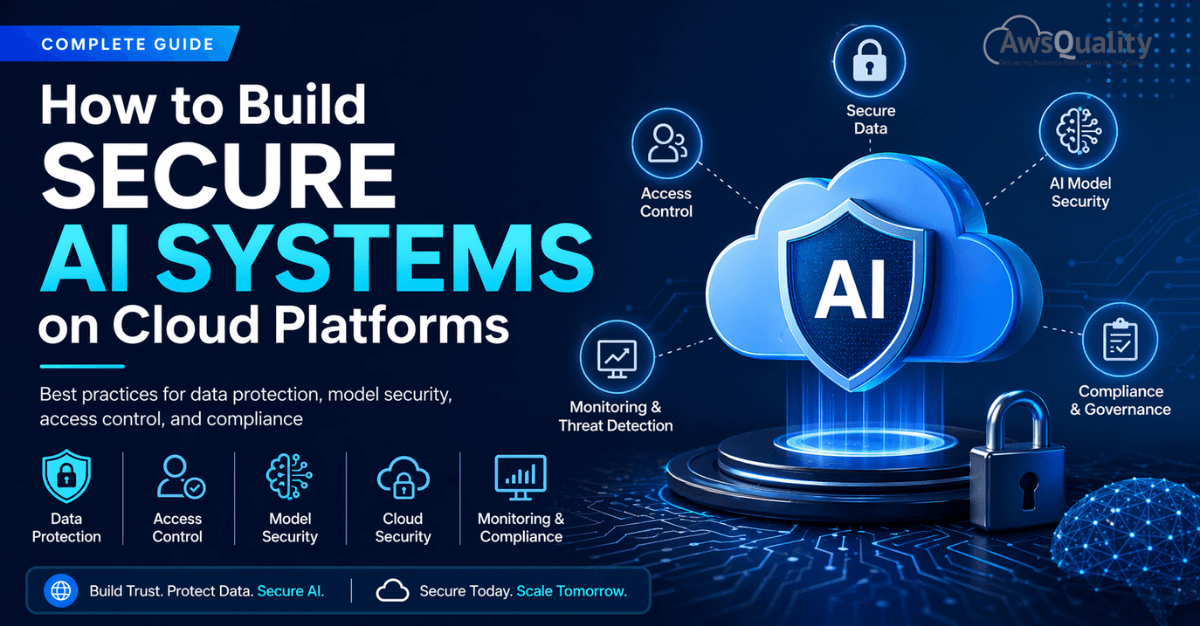

A secure AI system on cloud platforms is an AI solution designed with strong data protection, access control, model security, and continuous monitoring. It ensures that both data and machine learning models remain protected throughout their lifecycle—from training to deployment.

How to Build Secure AI Systems on Cloud Platforms

Building secure AI systems requires a layered approach that protects data, models, and infrastructure.

The most effective way to do this is by focusing on a few core areas: data security, access control, model protection, and continuous monitoring.

1. Start with Data Security

Data is the foundation of every AI system—and also its biggest risk.

AI models rely on large volumes of data, often including sensitive customer information. If this data is exposed, the entire system becomes vulnerable.

To secure data, organizations must ensure encryption at every stage—both when data is stored and when it is transmitted. Access to data should be tightly controlled, allowing only authorized users and systems to interact with it.

Another important principle is data minimization. Collect only what is necessary, and avoid storing unnecessary sensitive information. Where possible, anonymize or mask personal data to reduce risk.
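As a rough illustration of minimization and masking, the sketch below keeps only an allow-list of fields and replaces the raw user identifier with a keyed hash. The field names, the `ALLOWED_FIELDS` set, and both helper functions are hypothetical, and the key shown is a placeholder for a value loaded from a secret manager.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this illustrative model needs.
ALLOWED_FIELDS = {"age_band", "region", "purchase_count"}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the raw user ID for a pseudonym."""
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    minimized["user_ref"] = pseudonymize(str(record["user_id"]), secret_key)
    return minimized


if __name__ == "__main__":
    key = b"placeholder-key"  # assumption: in practice, load this from a secret manager
    raw = {
        "user_id": 1042,
        "email": "a@example.com",
        "age_band": "30-39",
        "region": "EU",
        "purchase_count": 7,
    }
    # The email and raw user_id are dropped; user_ref is a 64-character hex digest.
    print(minimize_record(raw, key))
```

A keyed hash is pseudonymization rather than full anonymization: anyone holding the key can re-link records, so the key needs the same protection as the original identifiers.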

👉 Secure data is the first step toward secure AI.

2. Implement Strong Identity and Access Management

Misconfigured access controls are one of the most common causes of cloud security failures.

AI systems involve multiple components—data pipelines, training environments, APIs—and each requires controlled access.

A strong identity and access management strategy ensures that users and systems only have access to what they need. Multi-factor authentication adds an extra layer of protection, while regular credential rotation reduces long-term risks.

Granting each user and system only the access it needs is known as the principle of least privilege, and it is essential for securing cloud-based AI systems.
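A minimal sketch of a deny-by-default permission check might look like the following. The role names and permission strings are invented for illustration; a real deployment would normally express the same idea in the cloud provider's own IAM policies.

```python
# Hypothetical roles and permissions for an AI platform.
ROLE_PERMISSIONS = {
    "data_engineer": {"dataset:read", "dataset:write"},
    "ml_engineer": {"dataset:read", "training:run", "model:register"},
    "inference_service": {"model:read"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions are rejected."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def require(role: str, permission: str) -> None:
    """Raise PermissionError unless the role was explicitly granted the permission."""
    if not is_allowed(role, permission):
        raise PermissionError(f"{role!r} is not granted {permission!r}")


if __name__ == "__main__":
    require("ml_engineer", "training:run")  # explicitly granted, so no error
    print(is_allowed("inference_service", "dataset:read"))  # not granted
```

Note that the inference service can read models but cannot touch datasets, so a compromised serving endpoint does not automatically expose training data.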

3. Secure the Model Training Process

Model training is where AI systems learn—and where vulnerabilities can be introduced.

If training data is compromised, the model itself can become unreliable. This type of attack, known as data poisoning, can alter how the AI behaves.

To prevent this, organizations should validate all data sources and monitor training pipelines for anomalies. Training environments should also be isolated from other systems to reduce exposure.
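One simple form of data validation is to check every incoming training row against an expected schema before it reaches the pipeline. The sketch below assumes a hypothetical two-feature dataset with a binary label; it catches malformed or out-of-range rows, not subtle poisoning that stays within valid ranges.

```python
# Hypothetical schema: field name -> (required type, minimum, maximum).
SCHEMA = {"age": (int, 0, 120), "income": (float, 0.0, 1e7)}
VALID_LABELS = {0, 1}


def validate_row(row: dict) -> list[str]:
    """Return a list of problems found in one training row (empty if clean)."""
    problems = []
    for field, (ftype, low, high) in SCHEMA.items():
        value = row.get(field)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, ftype):
            problems.append(f"{field}: missing or wrong type")
        elif not low <= value <= high:
            problems.append(f"{field}: out of range")
    label = row.get("label")
    if isinstance(label, bool) or not isinstance(label, int) or label not in VALID_LABELS:
        problems.append("label: unexpected value")
    return problems


def split_valid(rows: list[dict]) -> tuple[list[dict], list[dict]]:
    """Separate rows into (accepted, rejected) so rejects can be reviewed."""
    accepted, rejected = [], []
    for row in rows:
        (rejected if validate_row(row) else accepted).append(row)
    return accepted, rejected
```

Keeping the rejected rows, rather than silently dropping them, gives the team something to inspect when a data source starts misbehaving.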

Maintaining version control of models is equally important. It allows teams to track changes, roll back issues, and ensure that only approved models are deployed.
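To make "only approved models are deployed" concrete, one option is to record a cryptographic digest of each approved artifact and refuse to deploy anything that does not match. The `ModelRegistry` class below is a hypothetical in-memory sketch, not the API of any particular model registry product.

```python
import hashlib
import hmac


def sha256_digest(data: bytes) -> str:
    """Hex SHA-256 digest of a model artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


class ModelRegistry:
    """Hypothetical in-memory registry of approved model versions."""

    def __init__(self) -> None:
        self._approved: dict[tuple[str, str], str] = {}

    def approve(self, name: str, version: str, artifact: bytes) -> None:
        """Record the digest of an artifact that passed review."""
        self._approved[(name, version)] = sha256_digest(artifact)

    def is_deployable(self, name: str, version: str, artifact: bytes) -> bool:
        """True only if this exact artifact was approved under this name and version."""
        expected = self._approved.get((name, version))
        if expected is None:
            return False
        return hmac.compare_digest(expected, sha256_digest(artifact))


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.approve("churn-model", "1.2.0", b"serialized model bytes")
    print(registry.is_deployable("churn-model", "1.2.0", b"serialized model bytes"))
    print(registry.is_deployable("churn-model", "1.2.0", b"tampered model bytes"))
```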

4. Protect AI Models in Production

Once deployed, AI models are typically exposed through APIs. This makes them accessible—but also introduces new risks.

Unauthorized access, excessive usage, and model extraction are common concerns at this stage.

To secure deployed models, APIs should require authentication and enforce usage limits. Input validation is also critical to prevent malicious data from affecting outputs.

Monitoring API activity helps detect unusual behavior early, allowing teams to respond before issues escalate.
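The three controls above (authentication, usage limits, and input validation) can be sketched in a framework-agnostic way as follows. The function names, the fixed feature-vector length, and the bounds are assumptions for illustration; a production service would usually rely on its API gateway for the first two.

```python
import hashlib
import hmac
import time


class TokenBucket:
    """Simple per-client rate limiter: `capacity` burst, steady refill."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the request."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False


def key_is_valid(presented_key: str, stored_key_hash: str) -> bool:
    """Compare the hash of the presented API key to the stored hash in constant time."""
    presented_hash = hashlib.sha256(presented_key.encode("utf-8")).hexdigest()
    return hmac.compare_digest(presented_hash, stored_key_hash)


def validate_features(features, expected_len: int = 4, bound: float = 1e6) -> bool:
    """Accept only a list of `expected_len` finite numbers within +/- bound."""
    if not isinstance(features, list) or len(features) != expected_len:
        return False
    return all(
        isinstance(x, (int, float)) and not isinstance(x, bool) and abs(x) <= bound
        for x in features
    )
```

A request handler would call `key_is_valid`, then the client's `TokenBucket.allow()`, then `validate_features`, and only pass the input to the model if all three succeed. Rate limiting also raises the cost of model extraction, which depends on issuing large numbers of queries.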

5. Understand AI-Specific Security Risks

AI systems face unique threats that traditional applications do not.

Adversarial attacks manipulate inputs to trick a model into producing incorrect results. Model inversion attempts to reconstruct sensitive training data from a trained model's outputs. Model theft, also called model extraction, replicates the behavior of a proprietary AI system by querying it repeatedly.

These risks highlight the need for defensive strategies such as testing models against edge cases, limiting output exposure, and monitoring usage patterns.
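Limiting output exposure can be as simple as returning only the top label with a coarsely rounded confidence instead of the full probability vector. The `limit_output` helper below is a hypothetical sketch of that idea.

```python
def limit_output(probabilities: dict[str, float], decimals: int = 1) -> dict:
    """Return only the most likely label and a coarsely rounded confidence."""
    label = max(probabilities, key=probabilities.get)
    return {"label": label, "confidence": round(probabilities[label], decimals)}


if __name__ == "__main__":
    full = {"cat": 0.8731, "dog": 0.1269}
    print(limit_output(full))  # {'label': 'cat', 'confidence': 0.9}
```

Returning less detail gives an attacker less signal per query, at the cost of less information for legitimate clients, so the right level of detail depends on the use case.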

6. Monitor Systems Continuously

Security is not a one-time setup—it’s an ongoing process.

AI systems must be continuously monitored to detect anomalies, unauthorized access, and unusual behavior. Logging user activity, tracking API usage, and analyzing model outputs help identify potential threats early.

This proactive approach allows organizations to respond quickly and minimize impact.
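As one example of tracking API usage, the sketch below flags clients whose request count is far above the rest, using a modified z-score based on the median and the median absolute deviation. The function name, the input shape (a mapping of client ID to request count), and the 3.5 threshold are assumptions; real systems typically use the cloud provider's monitoring service for this.

```python
import statistics


def find_heavy_clients(counts_by_client: dict[str, int], threshold: float = 3.5) -> list[str]:
    """Return clients whose request count is unusually high compared to peers."""
    values = list(counts_by_client.values())
    if not values:
        return []
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0:
        # At least half the clients have identical counts; this score is undefined.
        return []
    return sorted(
        client
        for client, count in counts_by_client.items()
        if 0.6745 * (count - median) / mad > threshold
    )


if __name__ == "__main__":
    hourly = {"a": 100, "b": 110, "c": 95, "d": 105, "e": 5000}
    print(find_heavy_clients(hourly))  # ['e']
```

The check is one-sided on purpose: it looks for unusually heavy usage, which is the pattern associated with scraping or extraction attempts.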

7. Ensure Compliance and Governance

AI systems often operate in regulated environments where data privacy and security are critical.

Organizations must comply with the regulations that apply to them, such as GDPR or HIPAA, along with any industry-specific standards. This requires maintaining audit logs, documenting data usage, and implementing clear governance policies.

Strong governance ensures consistency, accountability, and long-term security.
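Audit logs are more useful as evidence when tampering is detectable. One common technique is to chain entries together by hash, so that editing any earlier entry invalidates everything after it. The sketch below is a simplified in-memory version with hypothetical field names; a real log would also record timestamps and live in append-only storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a log entry body using a stable JSON serialization."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, resource: str) -> dict:
    """Append an entry whose hash covers its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action, "resource": resource, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body.get("prev_hash") != prev_hash or entry.get("hash") != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```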

8. Secure the AI Development Lifecycle (MLOps)

AI systems are continuously evolving, which makes secure development practices essential.

Every stage—from code to deployment—should include security checks. Pipelines must be protected, dependencies should be scanned for vulnerabilities, and environments should be isolated.

This approach, often called secure MLOps, ensures that updates do not introduce new risks into the system.
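As a small example of a pipeline security check, the sketch below flags dependencies in a `requirements.txt`-style file that are not pinned to an exact version. This is not a vulnerability scanner; it only checks one precondition that makes scanning and reproducible builds more reliable. The function name and the simplified parsing rules are assumptions.

```python
import re

# Matches "name==version" or "name[extra]==version"; wildcards such as 1.* do not match.
PINNED = re.compile(r"[A-Za-z0-9._-]+(\[[^\]]+\])?\s*==\s*[A-Za-z0-9.+!_-]+")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that are not pinned to one exact version."""
    flagged = []
    for raw in text.splitlines():
        # Drop comments and environment markers, then surrounding whitespace.
        line = raw.split("#", 1)[0].split(";", 1)[0].strip()
        if not line or line.startswith("-"):
            continue  # skip blank lines and pip options such as -r or --index-url
        if not PINNED.fullmatch(line):
            flagged.append(line)
    return flagged


if __name__ == "__main__":
    sample = "requests==2.31.0\nnumpy>=1.24\n# a comment\nflask\n"
    print(unpinned_requirements(sample))  # ['numpy>=1.24', 'flask']
```

A check like this could run as an early pipeline step and fail the build when the returned list is not empty.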

9. Use Cloud Security Features Effectively

Cloud platforms provide built-in security tools such as identity management, encryption, and threat detection.

However, these tools are only effective if they are properly configured. Many security issues arise from incorrect settings rather than a lack of features.

Organizations must actively manage and optimize these tools to fully benefit from them.

10. Build a Security-Aware Culture

Technology alone cannot secure AI systems—people and processes play a critical role.

Human error, lack of awareness, and poor practices are common causes of security incidents. Training teams, defining clear policies, and conducting regular audits help reduce these risks.

Security must be treated as a shared responsibility across the organization.

Key Takeaways

- AI security must be built into every layer of the system

- Data protection is the foundation of secure AI

- Access control reduces unauthorized usage

- AI models require protection from unique threats

- Continuous monitoring is essential for long-term security

Traditional Security vs AI Security

| Aspect | Traditional Systems | AI Systems |
|---|---|---|
| Data Usage | Static | Continuous and evolving |
| Risk Type | Data breaches | Data + model attacks |
| Monitoring | System-focused | Behavior and model-focused |
| Complexity | Moderate | High |

What Are the Biggest Risks in AI Systems?

The biggest risks in AI systems include data breaches, unauthorized access, model manipulation, and adversarial attacks. These risks arise because AI systems rely heavily on data and automated decision-making, making them attractive targets for attackers.

What Is MLOps Security?

MLOps security refers to protecting the entire AI lifecycle, including data pipelines, model training, deployment, and monitoring, to ensure systems remain secure and reliable.

Best Practices for Securing AI Systems

- Use least-privilege access

- Encrypt sensitive data

- Validate training data

- Monitor system activity

- Regularly audit and update systems

Summary

Building secure AI systems on cloud platforms requires a combination of data protection, access control, model security, and continuous monitoring.

Organizations that adopt a security-first approach can reduce risks, ensure compliance, and build trustworthy AI systems that scale safely.

Frequently Asked Questions

1. What are secure AI systems?

Secure AI systems are designed with strong data protection, access control, and monitoring to prevent misuse and attacks.

2. Why is AI security important?

AI systems handle sensitive data and make automated decisions, which makes them attractive targets for breaches and manipulation.

3. How can I secure AI models?

You can secure AI models by implementing authentication, monitoring usage, and validating inputs.

4. What are common risks in AI systems?

Common risks include data breaches, model attacks, unauthorized access, and misconfigurations.

5. What is MLOps security?

MLOps security focuses on securing the AI development and deployment lifecycle.